YOLO-Based Water Cannon Auto-Aiming System

Dec 12, 2024 — Jun 30, 2026

Introduction

The YOLO-Based Water Cannon Auto-Aiming System is an intelligent robotic platform for autonomous target detection, tracking, and precision striking. Developed under the Sun Yat-sen University Innovation Training Program (Project No. 20251983), it integrates YOLO object detection, a distributed ROS2 architecture, and adaptive control to engage moving targets in aquatic environments.

Hardware Platform

Dual-Hull Unmanned Boat Design

- Structure: High-strength, reinforced catamaran hull designed for complex water conditions and heavy equipment loads. Dual underwater thrusters provide differential steering control.

- Propulsion: Dual ducted surface propellers supply auxiliary power and improve stability against waves and recoil forces.

- Extensibility: Modular layout with integrated battery packs, NUC computing cores, and expansion interfaces, providing ample space for high-power water pumps and shooting systems.

Hardware Configuration

- Mainboard: NVIDIA Jetson Xavier NX Super for GPU inference.

- Servo Gimbal: Fashionrobo intelligent servos with serial communication and PID control.

- Camera: Nuwa HP60C depth cameras for 3D perception and distance measurement.

Innovation Points

Dual-Hull Boat Structure and Multi-Propulsion Coordination

- Design: Catamaran with dual underwater thrusters for differential control and dual surface ducted propulsion, achieving high maneuverability and stability.

- Advantages: Improves lateral stability against waves and recoil, and leaves space for high-power water pumps.
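Differential control of the two underwater thrusters can be illustrated with a simple mixing function. This is a minimal sketch, not the project's actual controller: the function name `mix_differential`, the normalized command range of [-1, 1], and the sign convention (positive `turn` steers to starboard) are all assumptions made for illustration.

```python
from typing import Tuple


def mix_differential(throttle: float, turn: float) -> Tuple[float, float]:
    """Map throttle and turn commands in [-1, 1] to (left, right) thruster commands.

    Hypothetical convention: positive turn speeds up the left thruster and
    slows the right one, which yaws the boat to starboard.
    """
    left = throttle + turn
    right = throttle - turn
    # Scale both sides by the same factor so neither exceeds full power
    # while the left/right ratio (and therefore the turn) is preserved.
    scale = max(1.0, abs(left), abs(right))
    return left / scale, right / scale


if __name__ == "__main__":
    print(mix_differential(0.5, 0.0))  # straight ahead at half power
    print(mix_differential(0.0, 0.5))  # turn in place
    print(mix_differential(1.0, 1.0))  # saturated command, scaled down
```

Scaling both outputs together, rather than clamping each one separately, keeps the commanded turn direction intact when the sum saturates.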

Modular ROS2 Architecture

- Framework: Node-based architecture separating perception, decision-making, and execution for asynchronous communication and concurrent processing.

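- Benefits: Nodes run concurrently and communicate only through topics, so each one can be developed, replaced, or scaled independently, which improves performance, scalability, and maintainability.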

Adaptive Control Algorithms

- PID Integration: PID control with auto-tuning for the servo motors, including anti-windup, low-pass filtering, and dead-zone compensation to reduce power consumption and suppress oscillations.
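The PID features listed above can be sketched as follows. This is an illustrative example under stated assumptions, not the project's controller: the class name `ServoPID`, its parameters, and the default values are hypothetical. Anti-windup is done here by conditional integration, the low-pass filter is a first-order filter on the derivative term, and the dead zone is handled as a simple dead band on the error, which may differ from the compensation the project uses. Auto-tuning is not shown.

```python
from dataclasses import dataclass


@dataclass
class ServoPID:
    kp: float
    ki: float
    kd: float
    out_limit: float = 1.0   # symmetric output clamp (hypothetical units)
    dead_zone: float = 0.0   # errors smaller than this are treated as zero
    alpha: float = 0.2       # derivative low-pass coefficient, 0 < alpha <= 1
    integral: float = 0.0
    prev_error: float = 0.0
    d_filtered: float = 0.0

    def update(self, error: float, dt: float) -> float:
        if dt <= 0.0:
            raise ValueError("dt must be positive")
        # Dead band: ignore tiny errors so the servo does not hunt around the target.
        if abs(error) < self.dead_zone:
            error = 0.0
        # First-order low-pass filter on the derivative to damp measurement noise.
        raw_d = (error - self.prev_error) / dt
        self.d_filtered += self.alpha * (raw_d - self.d_filtered)
        self.prev_error = error
        # Compute the output with a tentative integral, then clamp it.
        candidate = self.integral + error * dt
        unclamped = self.kp * error + self.ki * candidate + self.kd * self.d_filtered
        output = max(-self.out_limit, min(self.out_limit, unclamped))
        # Anti-windup: keep integrating only if the output is not saturated,
        # or if the error would pull the output back out of saturation.
        if unclamped == output or error * unclamped < 0.0:
            self.integral = candidate
        return output


if __name__ == "__main__":
    pid = ServoPID(kp=0.8, ki=0.2, kd=0.05, dead_zone=0.5)
    for err in (10.0, 6.0, 2.0, 0.3):
        print(round(pid.update(err, dt=0.02), 4))
```

The dead band is what ties the controller to lower power draw: once the error falls inside it, the proportional term goes to zero and the servo stops making small corrective moves.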

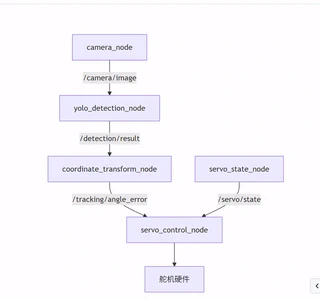

System Architecture

The system is built on ROS2 and divided into three layers:

Perception Layer

- Camera Monitor Node: Handles USB/depth camera inputs, publishes image streams to `/camera/image`.

- Object Detection Node: Uses YOLOv8 for real-time detection, publishes bounding boxes and confidence scores to `/detection/result`.
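To show how downstream nodes might consume bounding boxes and confidence scores, here is a small plain-Python sketch. The message format on `/detection/result` is not specified in this document, so the `Detection` class, the `select_target` function, and the 0.5 confidence threshold are assumptions for illustration only.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Detection:
    """Hypothetical detection record: pixel-space box corners plus a score."""
    x1: float
    y1: float
    x2: float
    y2: float
    confidence: float

    def center(self) -> Tuple[float, float]:
        # Box center in pixels, which is the point the gimbal would aim at.
        return ((self.x1 + self.x2) / 2.0, (self.y1 + self.y2) / 2.0)


def select_target(
    detections: List[Detection], min_confidence: float = 0.5
) -> Optional[Detection]:
    """Return the most confident detection above the threshold, or None."""
    candidates = [d for d in detections if d.confidence >= min_confidence]
    return max(candidates, key=lambda d: d.confidence, default=None)


if __name__ == "__main__":
    frame = [
        Detection(0, 0, 10, 10, 0.40),
        Detection(100, 50, 200, 150, 0.90),
    ]
    target = select_target(frame)
    if target is not None:
        print(target.center())
```

Returning `None` when nothing passes the threshold lets the caller hold the gimbal still instead of chasing a low-confidence detection.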

Decision Layer

- Tracking Calculation Node: Transforms pixel coordinates to angular errors using camera FOV parameters.
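The pixel-to-angle conversion can be sketched with a pinhole camera model. This is a minimal example under several assumptions: the principal point is at the image center, lens distortion is ignored, and the function name `pixel_to_angle_error` and the field-of-view values in the usage example are hypothetical rather than taken from the project's camera. With image width $W$ and horizontal field of view $\theta_h$, the focal length in pixels is $f_x = (W/2)/\tan(\theta_h/2)$, and the yaw error for a pixel column $u$ is $\arctan((u - W/2)/f_x)$.

```python
import math
from typing import Tuple


def pixel_to_angle_error(
    u: float, v: float,
    width: int, height: int,
    hfov_deg: float, vfov_deg: float,
) -> Tuple[float, float]:
    """Convert a pixel (u, v) to (yaw, pitch) errors in degrees.

    Positive yaw means the target is right of the image center; positive
    pitch means it is above the center (image v grows downward).
    """
    # Focal lengths in pixels, derived from the field of view.
    fx = (width / 2.0) / math.tan(math.radians(hfov_deg) / 2.0)
    fy = (height / 2.0) / math.tan(math.radians(vfov_deg) / 2.0)
    yaw = math.degrees(math.atan((u - width / 2.0) / fx))
    pitch = math.degrees(math.atan((height / 2.0 - v) / fy))
    return yaw, pitch


if __name__ == "__main__":
    # Hypothetical 640x480 camera with a 60 x 45 degree field of view.
    print(pixel_to_angle_error(320, 240, 640, 480, 60.0, 45.0))
    print(pixel_to_angle_error(640, 0, 640, 480, 60.0, 45.0))
```

Using the arctangent rather than a linear pixels-per-degree scale matters most near the image edges, where the linear approximation overestimates the angle.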

Execution Layer

- Servo Control Node: Implements adaptive PID controllers with auto-tuners for precise actuation.

- Servo Monitor Node: Monitors voltage, temperature, and position for safety.
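A safety check over voltage, temperature, and position might look like the sketch below. The names `ServoLimits` and `check_servo` are hypothetical, and every threshold is a placeholder chosen for illustration, not a specification of the servos used in this project.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ServoLimits:
    """Placeholder limits; real values must come from the servo datasheet."""
    min_voltage: float = 6.0
    max_voltage: float = 8.4
    max_temperature_c: float = 70.0
    min_angle_deg: float = -90.0
    max_angle_deg: float = 90.0


def check_servo(
    voltage: float,
    temperature_c: float,
    angle_deg: float,
    limits: ServoLimits = ServoLimits(),
) -> List[str]:
    """Return the names of all readings that fall outside the limits."""
    faults: List[str] = []
    if not limits.min_voltage <= voltage <= limits.max_voltage:
        faults.append("voltage")
    if temperature_c > limits.max_temperature_c:
        faults.append("temperature")
    if not limits.min_angle_deg <= angle_deg <= limits.max_angle_deg:
        faults.append("position")
    return faults


if __name__ == "__main__":
    print(check_servo(7.4, 40.0, 0.0))     # healthy reading
    print(check_servo(5.0, 80.0, 120.0))   # all three limits violated
```

Returning a list of fault names, rather than a single boolean, lets the caller decide whether to log a warning, reduce torque, or stop the gimbal.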

Integration

- Launch Package: One-click startup with configurable parameters for depth camera integration.

Key Achievements

Python Monolithic System (v1.1.8 / AIM V1.0)

- Software: “AIM Intelligent Target Tracking System” with multi-threaded architecture, SimplePID controller, SimpleTargetTracker, and CoordinateConverter.

- Features: Intelligent degradation protection, auto-tuning, and monitoring.

Project Team

- Project Leader: Cu Disheng (Information Engineering, 2023)

- Team Members: Cen Dai, Liu Chaoyin, Mao Lishan (Information Engineering, 2023)

- Supervisor: Hou Yanqing (School of Systems Science and Engineering)

Authors

Undergraduate in Information Engineering, School of Systems Science and Engineering, Sun Yat-sen University

Possesses extensive practical experience in robotics and deep learning, having participated in several robotics-related projects in national-level competitions and secured awards. Currently engaged in research on robot locomotion and manipulation, with a keen interest in applying reinforcement learning to embodied AI and robotic control.